I recently used AI to create a graphic for our newsletter and social media, and it backfired. I thought it would be harmless to use ChatGPT to turn our Rigorous Raven into a Christmas-themed raven, but the result spoke for itself, and our advisors took notice. The Christmas Raven was clearly not created by a human, and in my excitement over the quick result, I failed to notice.

That was, of course, not the first time someone on our team used Artificial Intelligence or Large Language Models. Most of C4R's work does not involve the use of AI, but instances like this prompted our team to work collaboratively on an AI policy that delineates, in detail, how and when we will use these tools to create our scientific rigor curriculum, its interactive activities, and our promotional and community-building content. In the spirit of full transparency, a cornerstone of rigor, we’re sharing that policy below.

Acronyms

The creation of our scientific rigor curriculum takes a village. C4R is funded by the NIH-NINDS (National Institute of Neurological Disorders and Stroke); our content is created in collaboration with teams of professors from universities across the United States, called METERs; and CENTER, a coordinating team of curriculum and software developers, project managers, advisors, and community engagement folks, is led by our P.I., Konrad Kording.

Community for Rigor

Artificial Intelligence Use Policy

Approved on: February 13, 2026

Introduction

This document outlines the principles and guidelines for effective, ethical, secure and responsible use of Artificial Intelligence (AI) by the Community for Rigor (C4R), and specifically the CENTER grant recipient, CENTER PI, CENTER’s staff, METER grant recipients, METER PI(s) and their staff. This policy aims to provide greater transparency and guidance for authors, readers, reviewers, contributors, and end users of C4R’s materials and content.

The scope of this policy covers all C4R materials, including but not limited to units and educational materials, all additional public-facing materials produced by C4R (e.g. user communication, promotional materials, user research materials, social media content, etc.), and materials or content that are not public-facing (e.g. outlines, interactive activity prototypes, initial unit drafts).

Generative AI and AI-assisted technologies are increasingly being used throughout the research process, for tasks such as synthesizing complex literature, analyzing data, improving readability, and writing code. However, C4R and its members must maintain the standards of AI use as decided and agreed upon by the C4R Steering Committee to ensure the accuracy and value of our materials.

Principles of AI Use

As a part of the scientific community, C4R must understand how AI is used by scientists and communicate a standard for AI usage. However, AI is still a new technology that struggles with reproducibility, hallucinated references, and misattribution, among other limitations. Because of these limitations, C4R must hold itself to a collectively decided standard for AI usage, reviewed and renewed annually by current C4R team members, to ensure our products are trusted and human-verified.

Additionally, C4R must present itself as a trustworthy enterprise to protect the reputation of its work. We believe in respecting the time and attention of the researchers, teachers, and students who make use of our materials. We do this by developing materials that advance the vanguard of thought in the field of scientific rigor. We will endorse AI when it assists in the expression of that thought and reject it when it interferes with that thought.

To maintain the highest standard and reputation for our products and image, C4R will never present anything public-facing that was generated by AI. However, AI can be used in the drafting and post-production of our materials when human oversight is included and quality is validated thoroughly. If the potential for public viewing of materials exists, C4R will not use AI to generate those materials.

Our acceptable use cases broadly fall into the category of adversarial use, where AI is used to identify weaknesses or limitations of provided content, or places where that content diverges from established norms. For example, AI can be used to review an outline and provide suggestions for additional content, but the addition of information to the outline must be done by a human. We urge caution that some common use cases of AI may defeat the purpose of the activities they accomplish, e.g., reverse outlining material to skip having to read and understand that material.

When AI use is not adversarial, C4R will only use AI for coding, early brainstorming, and thought partnering. For example, AI can be used to conduct a literature search on a topic, but the sources must still be reviewed and validated by a human. AI can be used to extract data points from a figure intended to be reproduced, but a human must generate the new graph and confirm it accurately matches the original. AI can be used to generate ideas for social media content, but the final draft content must be written by a human.

The sections below identify key definitions and C4R’s use of terms, prohibited and permitted usages of AI, expectations for third-party contractors, expectations of public use of C4R materials, and a set of criteria to determine if AI usage would be permissible in novel, unaccounted-for situations. Additionally, we provide a policy for transparent declaration of AI usage in C4R material production, to accompany our materials wherever they are available to the public.

Definitions

Alter refers to the submission of an existing product and plain language prompt to an AI application to create a modified product.

Analyze refers to the submission of data and plain language prompts to an AI application to identify trends or perform statistical tests.

Drafting refers to the creation of content (text, image, video, audio, etc.) via submission of a plain language prompt to an AI application.

Extract means submission of a figure, image, or dataset and plain language prompt to an AI application to obtain estimates, values, or information contained therein.

Generate a product, X, refers to the creation of the product X, via submission of a plain language prompt to an AI application.

Partner means submitting one or more plain language prompts to an AI application, then iterating on application outputs through submission of additional plain language prompts, followed by expert review and validation.

Post-production refers to the submission of plain language prompts to an AI application to refine, identify limitations of, or otherwise polish a given product without generating new content.

Summarize refers to the submission of existing content into an AI application to shorten, reword, digest, or otherwise rearticulate that content.

Prohibited AI Usage

C4R Materials are published under a CC-BY license. Our materials are created without the use of AI in the following ways and we request that others do the same when using our materials.

- Analyzing user-generated content and data containing personally identifiable information.

- Generating C4R promotional materials.

- Generating C4R unit content.

- Generating examples submitted to CENTER by METER grant recipients.

- Generating or altering images and art assets.

- Generating scientific figures.

- Generating surveys or other user research materials.

- Generating writing submitted to CENTER by METER grant recipients.

- Summarizing work submitted to CENTER by METER grant recipients.

Permitted AI Usage

Most C4R work does not involve the use of AI. When AI is used, we use it in the following ways and encourage thoughtful validation and investigation of AI application outputs in those cases. We strongly discourage the use of any C4R content for AI model training purposes.

- Altering interactive activities.

- Analyzing user-generated content and data not containing personally identifiable information.

- Drafting an outline.

- Extracting information from figures.

- Generating accessibility measures (e.g. closed captions).

- Generating fictitious examples used in educational content.

- Generating intentionally flawed or poor examples of scientific work.

- Generating prototypes for interactive activities.

- Partnering on outlining ideas and content.

- Partnering on searches for previously published examples and relevant scientific literature.

- Post-production of ideas and content.

- Summarizing work created by CENTER.

Third Party Contractors

When CENTER, METERs, or any other members or entities in C4R engage third-party contractors for any work (including but not limited to graphic design work, development of educational materials, and building unit activities), all third-party contractors must be informed of C4R’s AI use policy and must follow it. It is the responsibility of the contracting entity within C4R to ensure the AI policy is being followed. Contracting entities determined to have violated the AI policy will be dismissed as soon as possible, and implicated products will not be used.

Using AI with Public C4R Materials

C4R materials are created without the use of AI in the ways listed above, and we request that any modification or reuse of our materials follow these policies. We ask that uses of AI in reuse or modification of C4R materials be disclosed so that audiences can appropriately evaluate the credibility of the work shown. A standard disclosure statement is included at the end of this document and is available on all C4R units.

Novel Cases

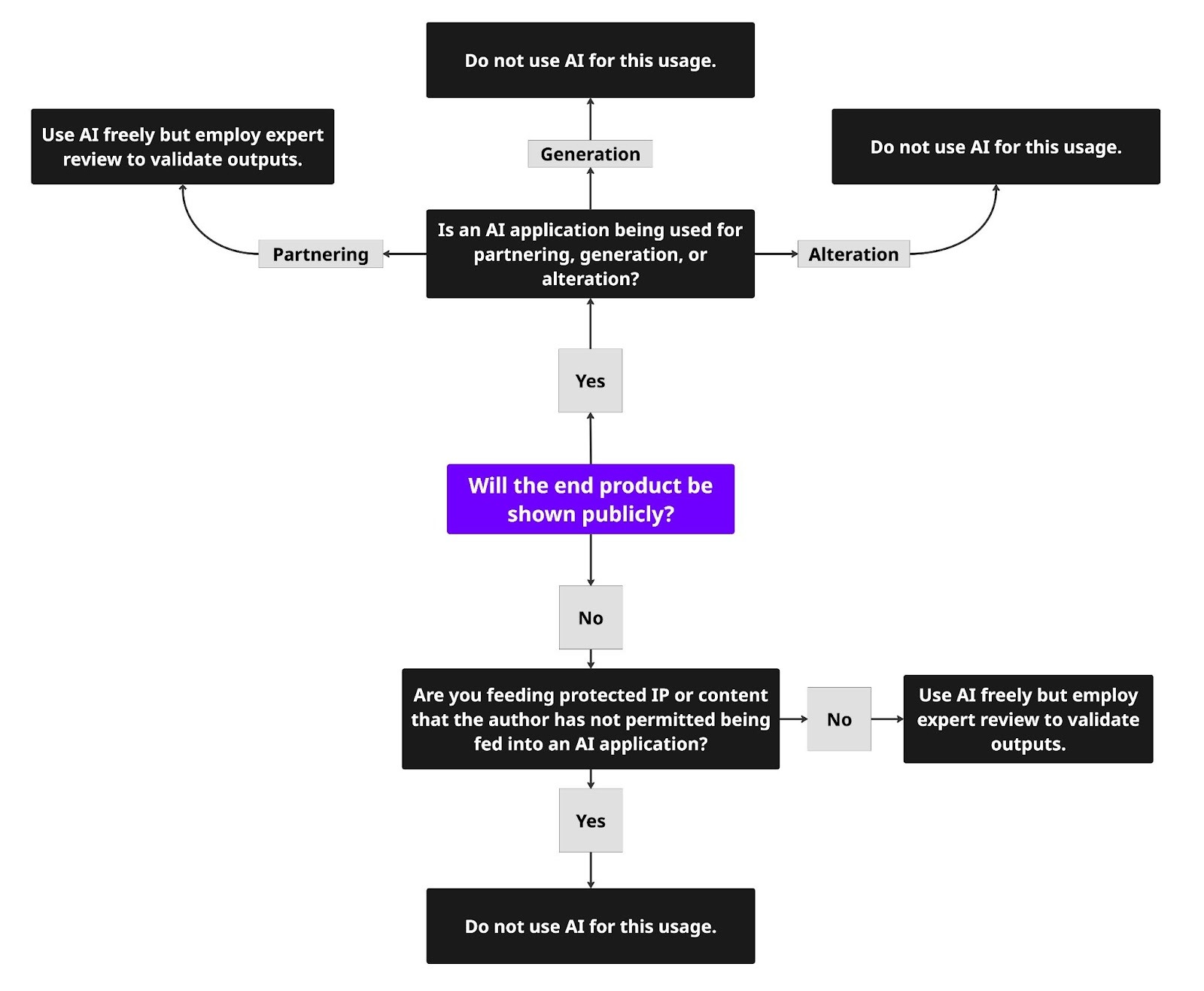

AI technologies are constantly evolving and may outpace the use cases described above. Please refer to the following flowchart to determine whether an AI use case is appropriate.

Transparency in AI usage

The C4R website and all units will have a disclosure detailing how and why AI was used in different parts of the unit development process, seen and approved by the METER author and CENTER (or by the NINDS partner if the METER is no longer an active grant participant), as well as a link to the final draft of this AI policy.

AI Usage Disclosure

The Community for Rigor uses AI applications in restricted ways in our work. AI is not used to generate images, figures, content, promotional materials, or in any user research context that includes personally identifiable information. AI is used to search for and summarize literature, to outline content, to create and edit code, to create intentionally flawed examples, to extract information from figures, and to generate accessibility measures.